I don’t know how people can be so easily taken in by a system that has been proven to be wrong about so many things. I got an AI search response just yesterday that dramatically understated an issue by citing an unscientific ideologically based website with high interest and reason to minimize said issue. The actual studies showed a 6x difference. It was blatant AF, and I can’t understand why anyone would rely on such a system for reliable, objective information or responses. I have noted several incorrect AI responses to queries, and people mindlessly citing said response without verifying the data or its source. People gonna get stupider, faster.

That’s why I only use it as a starting point. It spits out “keywords” and a fuzzy gist of what I need, then I can verify or experiment on my own. It’s just a good place to start or a reminder of things you once knew.

An LLM is like talking to a rubber duck on drugs while also being on drugs.

I like to use GPT to create practice tests for certification tests. Even if I give it very specific guidance to double check what it thinks is a correct answer, it will gladly tell me I got questions wrong and I will have to ask it to triple check the right answer, which is what I actually answered.

And in that amount of time it probably would have been just as easy to type up a correct question and answer rather than try to repeatedly corral an AI into checking itself for an answer you already know. Your method works for you because you have the knowledge. The problem lies with people who don’t and will accept and use incorrect output.

Well, it makes me double check my knowledge, which helps me learn to some degree, but it’s not what I’m trying to make happen.

I don’t know how people can be so easily taken in by a system that has been proven to be wrong about so many things

Ahem. Wasn’t there an election recently, in some big country, with an uncanny similarity to that?

Yeah. Got me there.

I know a few people who are genuinely smart but got so deep into the AI fad that they are now using it almost exclusively.

They seem to be performing well, which is kind of scary, but sometimes they feel like MLM people with how pushy they are about using AI.

Most people don’t seem to understand how “dumb” AI is. And it’s scary when I read shit like that they use AI for advice.

People also don’t realize how incredibly stupid humans can be. I don’t mean that in a judgemental or moral kind of way, I mean that the educational system has failed a lot of people.

There’s some % of people that could use AI for every decision in their lives and the outcome would be the same or better.

That’s even more terrifying IMO.

No, no, not being judgemental and moral is how we got to this point in the first place. Telling someone they’re being foolish when they’re acting foolishly used to be pretty normal. But after a couple decades of internet white-knighting, correcting or even voicing opposition to obvious stupidity is just too exhausting.

Dunning-Kruger is winning.

I was convinced about 20 years ago that at least 30% of humanity would struggle to pass a basic sentience test.

And it gets worse as they get older.

I have friends and relatives that used to be people. They used to have thoughts and feelings. They had convictions and reasons for those convictions.

Now, I have conversations with some of these people I’ve known for 20 and 30 years and they seem exasperated at the idea of even trying to think about something.

It’s not just complex topics, either. You can ask them what they saw on a recent trip, what they are reading, or how they feel about some show and they look at you like the hospital intake lady from Idiocracy.

There is something I don’t understand… openAI collaborates in research that probes how awful its own product is?

If I believed that they were sincerely interested in trying to improve their product, then that would make sense. You can only improve yourself if you understand how your failings affect others.

I suspect however that Saltman will use it to come up with some superficial bullshit about how their new 6.x model now has a 90% reduction in addiction rates; you can’t measure anything, it’s more about the feel, and that’s why it costs twice as much as any other model.

Correlation does not equal causation.

You have to be a little off to WANT to interact with ChatGPT that much in the first place.

I don’t understand what people even use it for.

Compiling medical documents into one, anything of that sort: summarizing, compiling, coding issues. It saves a wild amount of time compiling lab results, which a human could do but it would take many times longer.

Definitely needs to be cross referenced and fact checked as the image processing or general responses aren’t always perfect. It’ll get you 80 to 90 percent of the way there. For me it falls under “solving 20 percent of the problem gets you 80 percent of the way to your goal”. It needs a shitload more refinement. It’s a start, and it hasn’t been a straight path of progress, as nothing is.

I use it to generate a little function in a programming language I don’t know so that I can kickstart what I need to look for.

There’s a few people I know who use it for boilerplate templates for certain documents, who then of course go through it with a fine-toothed comb to add relevant context and fix obvious nonsense.

I can only imagine there are others who aren’t as stringent with the output.

Heck, my primary use for a bit was custom text adventure games, but ChatGPT has a few weaknesses in that department (very, very conflict averse for beating up bad guys, etc.). There’s probably ways to prompt engineer around these limitations, but a) there’s other, better suited AI tools for this use case, b) text adventure was a prolific genre for a bit, and a huge chunk made by actual humans can be found here - ifdb.org, c) real, actual humans still make them (if a little artsier and moodier than I’d like most of the time), so eventually I stopped.

Did like the huge flexibility vs. the parser available in most human-made text adventures, though.

I use it to make all decisions, including what I will do each day and what I will say to people. I take no responsibility for any of my actions. If someone doesn’t like something I do, too bad. The genius AI knows better, and I only care about what it has to say.

I use it many times a day for coding and solving technical issues. But I don’t recognize what the article talks about at all. There’s nothing affective about my conversations, other than the fact that using typical human expression (like “thank you”) seems to increase the chances of good responses. Which is not surprising since it better matches the patterns that you want to evoke in the training data.

That said, yeah of course I become “addicted” to it and have a harder time coping without it, because it’s part of my workflow just like Google. How well would anybody be able to do things in tech or even life in general without a search engine? ChatGPT is just a refinement of that.

Long story short, people that use it get really used to using it.

Or people who get really used to using it, use it

That’s a cycle sir

New DSM / ICD is dropping with AI dependency. But it’s unreadable because image generation was used for the text.

This is perfect for the billionaires in control, now if you suggest that “hey maybe these AI have developed enough to be sentient and sapient beings (not saying they are now) and probably deserve rights”, they can just label you (and that argument) mentally ill

Foucault laughs somewhere

What the fuck is vibe coding… Whatever it is I hate it already.

Using AI to hack together code without truly understanding what you’re doing

It’s when you give the wheel to someone less qualified than Jesus: Generative AI

Andrej Karpathy (one of the founders of OpenAI, left OpenAI to work for Tesla from 2017 to 2022, worked for OpenAI a bit more, and is now working on his startup “Eureka Labs - we are building a new kind of school that is AI native”) made a tweet defining the term:

There’s a new kind of coding I call “vibe coding”, where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It’s possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good. Also I just talk to Composer with SuperWhisper so I barely even touch the keyboard. I ask for the dumbest things like “decrease the padding on the sidebar by half” because I’m too lazy to find it. I “Accept All” always, I don’t read the diffs anymore. When I get error messages I just copy paste them in with no comment, usually that fixes it. The code grows beyond my usual comprehension, I’d have to really read through it for a while. Sometimes the LLMs can’t fix a bug so I just work around it or ask for random changes until it goes away. It’s not too bad for throwaway weekend projects, but still quite amusing. I’m building a project or webapp, but it’s not really coding - I just see stuff, say stuff, run stuff, and copy paste stuff, and it mostly works.

People ignore the “It’s not too bad for throwaway weekend projects”, and try to use this style of coding to create “production-grade” code… Let’s just say it’s not going well.

source (xcancel link)

The amount of damage a newbie programmer without a tight leash can do to a code base/product is immense. Once something is out in production, that is something you have to deal with forever. That temporary fix they push is going to be still used in a decade and if you break it, now you have to explain to the customer why the thing that’s been working for them for years is gone and what you plan to do to remedy the situation.

A newbie without a leash just pushing whatever an AI hands them into production. O, boy, are senior programmers going to be sad for a long, long time.

Hung

Hunged

Most hung

Hungrambed

Well TIL thx for the info been using it wrong for years

I know I am but what are you?

People addicted to tech omg who could’ve guessed. Shocked I tell you.

The quote was originally on news and journalists.

I remember thinking this when I was like 15. Every time they mentioned tech, wtf this is all wrong! Then a few other topics, even ones I only knew a little about, so many inaccuracies.

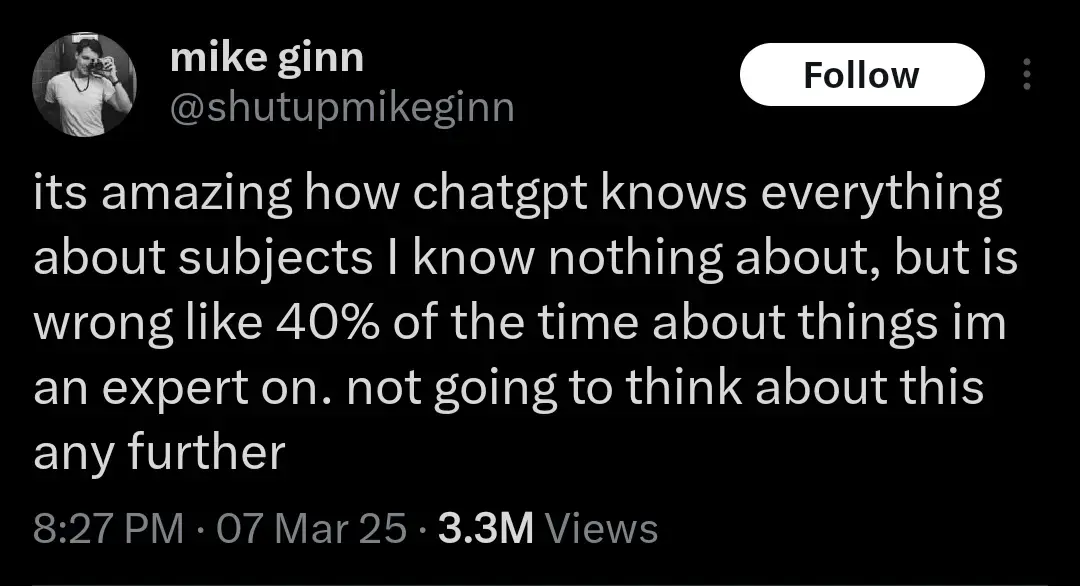

Another realization might be that the humans whose output ChatGPT was trained on were probably already 40% wrong about everything. But let’s not think about that either. AI Bad!

AI Bad!

Yes, it is. But not in, like a moral sense. It’s just not good at doing things.

I’ll bait. Let’s think:

- there are three humans who are 98% right about what they say, and where they know they might be wrong, they indicate it
- now there is an llm (fuck capitalization, I hate the ways they are shoved everywhere that much) trained on their output
- now llm is asked about the topic and computes the answer string

By definition that answer string can contain all the probably-wrong things without proper indicators (“might”, “under such and such circumstances” etc)

If you want to say 40% wrong llm means 40% wrong sources, prove me wrong

It’s more up to you to prove that a hypothetical edge case you dreamed up is more likely than what happens in a normal bell curve. Given the size of typical LLM data this seems futile, but if that’s how you want to spend your time, hey knock yourself out.

Lol. Be my guest and knock yourself out, dreaming you know things

This is a salient point that’s well worth discussing. We should not be training large language models on any supposedly factual information that people put out. It’s super easy to call out a bad research study and have it retracted. But you can’t just explain to an AI that that study was wrong, you have to completely retrain it every time. Exacerbating this issue is the way that people tend to view large language models as somehow objective describers of reality, because they’re synthetic and emotionless. In truth, an AI holds exactly the same biases as the people who put together the data it was trained on.

Brain bleaching?

It’s too bad that some people seem to not comprehend all ChatGPT is doing is word prediction. All it knows is which next word fits best based on the words before it. To call it AI is an insult to AI… we used to call OCR AI, now we know better.

LLM is a subset of ML, which is a subset of AI.

And sunshine hurts.

Said the vampire from Transylvania.

I need to read Amusing Ourselves to Death…

My notes on it https://fabien.benetou.fr/ReadingNotes/AmusingOurselvesToDeath

But yes, stop scrolling, read it.

This makes a lot of sense because, as we have been seeing over the last decade or so, digital-only socialization isn’t a replacement for in-person socialization. Increased social media usage shows increased loneliness, not a decrease. It makes sense that something even more fake like ChatGPT would make it worse.

I don’t want to sound like a Luddite but overly relying on digital communications for all interactions is a poor substitute for in-person interactions. I know I have to prioritize seeing people in the real world because I work from home and spending time on Lemmy during the day isn’t fulfilling.

In person socialization? Is that like VR chat?

Negative IQ points?